- Takeaways

- Virtual Influencers: A New Force in Brand Strategy

- AI Influencer Transparency

- Can AI Influencers Ever Be Authentic?

- Ethical Questions Regarding AI Influencers

- Impact on Creators, Diversity, and the Economy

- The Regulatory Horizon

- Who's Responsible for AI Influencer Ethics?

- How to Future-Proof Your Strategy?

- Ethics as a Strategy, Not Constraint

- Final Words

- FAQs

Takeaways

- Transparency is key to AI influencer ethics. It requires open disclosure of the artificial nature of virtual influencers from the start to maintain audience trust and prevent feelings of deception or betrayal.

- Virtual influencer ethics issues are best addressed by embedding transparency into the persona’s narrative and using hybrid models with human oversight to maintain accountability and support real creators.

- To protect audience trust over the long term, brands should weave AI influencer transparency into the persona’s core story.

Could a single digital face, flawless, tireless, and scripted to perfection, quietly rewrite how millions connect, shop, and see themselves?

That is the quiet power virtual influencers hold in marketing today, and it raises key questions about AI influencer ethics. Millions of users follow them on TikTok, Instagram, and YouTube. These characters command attention, spark loyalty, and drive revenue without the messiness of real human lives.

Yet the very control that makes them attractive also opens serious questions: When everything feels real but nothing truly is, how do we handle transparency, and what lasting changes does it bring to society?

I’m going to examine the tensions around virtual influencer ethics more closely. This pillar article is a ready-to-use ethical playbook. It equips marketing leaders with fresh viewpoints, practical solutions, and character-first strategies that turn ethical awareness into durable business growth.

Keep reading to view things through the lens of smart, sustainable marketing.

Virtual Influencers Have Become a New Force in 2026 Brand Strategy

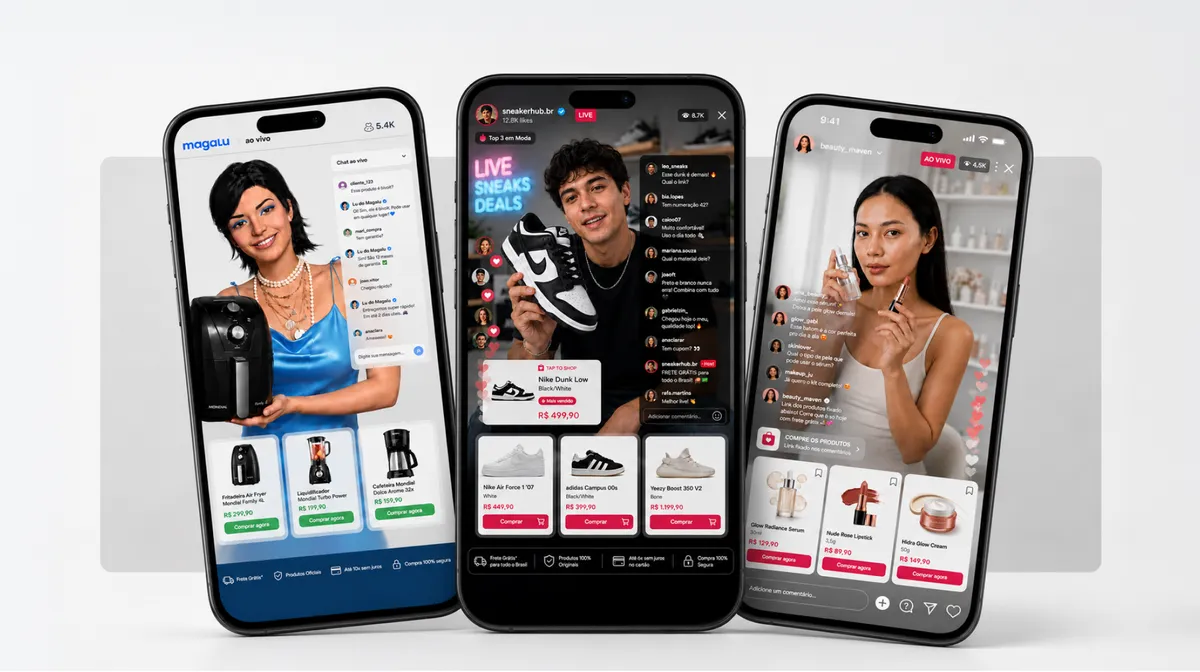

AI influencers are synthetic personas built with advanced imaging, generative tools, and language models. They post, reply, endorse, and build communities exactly like human creators.

Yet they never tire, age, or step off-script. Pioneers such as Lil Miquela and Shudu Gram have shown that the model works. They deliver consistent output, zero scandal risk, and total narrative command.

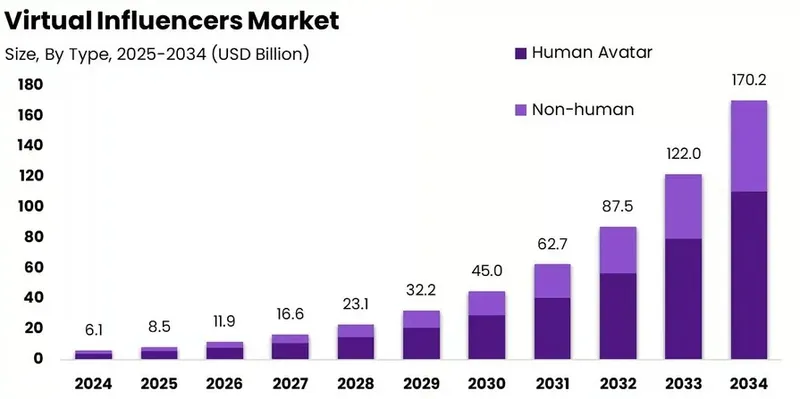

Do people follow virtual influencers? Yes, and the business case is strong. The global AI influencer market hit $6.95 billion in 2024, while the broader influencer marketing sector reached $6.1 billion and heads toward $170.86 billion by 2034.

Some campaigns report engagement rates three times higher than human-led efforts in targeted niches. Costs can fall to a fraction of traditional fees, with round-the-clock availability and instant iteration based on performance data.

For character-based marketing, this means teams design personas aligned perfectly with brand values from day one. No talent scouting, no contract drama, just engineered consistency that scales globally.

Still, the appeal comes with caveats. Early excitement around control has met growing skepticism. Not every adult has even heard of AI influencers, and many who have don’t understand how they operate.

This awareness gap signals an opportunity for brands that lead with clarity rather than hide behind the pixels.

AI Influencer Transparency: The Line Between Story and Deception

Transparency is the gateway to ethical integrity and the test every AI influencer strategy must pass. Audiences form real emotional bonds with these characters. When the synthetic nature stays hidden, those bonds can turn into a sense of betrayal once it is revealed. Research shows many users still miss the artificial cues even when basic labels are present.

For marketing teams, weak transparency does more than hurt one post. It chips away at the trust that powers long-term advocacy and repeat sales. Regulators have moved quickly.

The U.S. Federal Trade Commission now requires clear, conspicuous disclosures for AI-generated influencers, treating them like paid endorsements. Non-compliance risks fines up to $51,744 per violation.

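In Europe, Article 50 of the EU AI Act sets transparency obligations for synthetic content: people must be informed when they interact directly with an AI system, and AI-generated or manipulated images, audio, and video that could pass as authentic (deepfakes) must be disclosed.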

Take Care of Trust, Engagement Takes Care of Itself!

Imagine a beauty brand that launches a virtual ambassador sharing “daily struggles” with skin confidence that mirror customer pain points. Early engagement impresses the team. Yet when followers later learn the struggles were scripted fiction, comments sour and loyalty slips.

The solution? It’s simple. Brands should instead weave transparency into the persona’s core story. Call the figure a creative digital companion or artistic experiment. That keeps audiences engaged because respect replaces surprise.

A 2025 systematic literature review of 51 studies confirms the pattern. Perceived authenticity drives trust, while the uncanny valley effect (that faint unease when something looks almost human) fades faster with upfront disclosure.

The review introduces the Virtual Influencer Trust and Engagement Model (VITEM), which shows how disclosure transparency, cultural context, and authenticity interact through trust as the central mediator.

In character-centered marketing, transparency stops being a legal checkbox. It becomes a distinctive trait that sets your digital persona apart as intentional art rather than imitation.

Can AI Influencers Ever Be Authentic?

This question surfaces often in discussions of virtual influencer ethics. When brands commit to virtual influencer transparency, the answer is yes.

Authenticity emerges not from pretending to be human but from openly embracing a digital identity rooted in consistent values, creative storytelling, and honest disclosure. This approach builds real emotional connections based on clarity rather than illusion.

That turns potential skepticism into deeper loyalty while aligning fully with responsible AI influencer ethics.

Ethical Questions Regarding AI Influencers: How Do They Reshape Norms and Expectations?

They Quietly Influence Beauty Standards

They also shape behavior models and ideas of what counts as a genuine connection.

AI influencers shape more than sales funnels. Their flawless images set bars no real person can reach, amplifying pressures already present on social platforms.

Consider Shudu Gram, a virtual Black high-fashion model created by a white artist. Commercial wins followed, including campaigns with Balmain, Ellesse, and Fenty Beauty.

So did a sharp debate about appropriation: simulated diversity filled screens while real models from those communities saw fewer opportunities.

Lil Miquela’s scripted storyline involving trauma drew public criticism from singer Kehlani, who called out the discomfort of a non-human entity borrowing real pain for engagement. These moments reveal broader risks.

They Create Unrealistic Expectations

Optimized perfection without vulnerability can shift audience expectations for human relationships.

Data already link idealized imagery to higher anxiety and lower self-esteem. AI versions intensify the dynamic by removing even the slight imperfections that edited human photos retain.

Representation stats underline the issue: only 19% of people featured in ads come from minority groups, and individuals with disabilities appear in less than 2% of media images despite making up 20% of the population.

Global Brands Face Added Complexity

Cultural responses differ sharply. Some audiences treat the novelty as playful fiction; others see manipulation when emotional cues feel too real. Marketing strategy must map these variations rather than roll out one template worldwide.

The Human Costs: Impact on Creators, Diversity, and the Economy

AI influencers do not operate in a vacuum. They reshape the creator economy itself.

When brands shift budgets to synthetic talent, real human influencers lose opportunities, especially emerging voices from underrepresented groups. Critics note that simulated diversity can replace genuine inclusion rather than expand it.

Organizational Readiness Also Lags

Sixty percent of marketing teams lack AI influencer ethics guidelines, and seventy-five percent have no clear roadmap. This gap leaves room for unintended bias baked into training data, which can perpetuate the underrepresentation described above.

From a growth perspective, brands that diversify both human and virtual voices create richer ecosystems. They avoid backlash and tap wider talent pools. Character-based strategy here means using AI to amplify, not displace, real creators through hybrid campaigns that showcase both.

Psychological Impact Is Unavoidable

Three psychological dimensions stand out: parasocial bonds, the uncanny valley, and emotional transparency.

Audiences do not just follow AI influencers. Many form one-sided relationships that feel deeply personal. Research shows people treat these characters as human enough to build emotional investment, yet the illusion carries risks.

The uncanny valley effect creates subtle discomfort that erodes trust over time. New concepts like “emotional transparency” (the need for synthetic agents to disclose that feelings are simulated) emerge as ethical imperatives.

A 2025 cross-cultural study introduces “synthetic misrecognition” as the relational risk when audiences mistake programmed consistency for genuine reciprocity. For marketing, this means tracking not only likes but also qualitative sentiment after disclosure moments.

Brands that openly address the simulated nature of emotions build deeper, more honest connections. They turn potential unease into shared understanding and stronger loyalty.
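
One way to act on the advice to track sentiment after disclosure moments is to compare comment sentiment in equal windows before and after a disclosure post. The sketch below is a minimal illustration, assuming you already have a per-comment sentiment score between -1 and 1 from some sentiment tool; the `Comment` type, the `disclosure_sentiment_shift` function, and the 14-day window are hypothetical choices, not a standard method.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Optional


@dataclass
class Comment:
    posted: date
    sentiment: float  # hypothetical score in [-1, 1] from your sentiment tool


def disclosure_sentiment_shift(
    comments: list[Comment], disclosure_day: date, window_days: int = 14
) -> Optional[dict]:
    """Compare mean sentiment in equal windows before and after a disclosure post."""
    # Comments posted 1..window_days days before the disclosure day.
    before = [c.sentiment for c in comments
              if 0 < (disclosure_day - c.posted).days <= window_days]
    # Comments posted on the disclosure day or up to window_days - 1 days after it.
    after = [c.sentiment for c in comments
             if 0 <= (c.posted - disclosure_day).days < window_days]
    if not before or not after:
        return None  # too little data on one side to compare
    return {
        "before": round(mean(before), 3),
        "after": round(mean(after), 3),
        "shift": round(mean(after) - mean(before), 3),
        "n_before": len(before),
        "n_after": len(after),
    }


# Usage with made-up numbers:
comments = [
    Comment(date(2026, 3, 1), 0.6),
    Comment(date(2026, 3, 5), 0.4),
    Comment(date(2026, 3, 12), -0.2),
    Comment(date(2026, 3, 15), 0.1),
]
print(disclosure_sentiment_shift(comments, date(2026, 3, 10)))
```

A negative `shift` is a cue to read the comments themselves, not a verdict; small samples on either side of the disclosure date can swing the averages.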

What Is the Character-Based Path Forward?

Straightforward answer:

Design personas that proudly lean into their digital identity, as visionary guides or creative storytellers, instead of mimicking human flaws.

This approach respects intelligence, protects reputation, and positions the brand as thoughtful in an increasingly aware market.

The Regulatory Horizon: How to Navigate Compliance in a Shifting Landscape?

Rules have caught up fast, turning transparency from a nice-to-have into a non-negotiable. The EU AI Act requires clear labeling for generative content that risks deception, with phased enforcement already underway.

The FTC’s updated Endorsement Guides treat AI influencers like any paid relationship: disclosure must be clear and conspicuous, not buried in fine print or quick video flashes.
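
Because disclosure has to be clear and conspicuous, some teams add an automated pre-publish check to catch captions where the label is missing or buried. The following sketch is a heuristic under stated assumptions, not a legal test: the disclosure phrases, the 125-character preview length, and the function name `disclosure_problems` are all hypothetical and should be replaced with wording and rules your legal team approves.

```python
import re

# Hypothetical disclosure wording; agree on the real wording with your legal team.
DISCLOSURE_PATTERNS = [
    r"\bai[- ]generated\b",
    r"\bvirtual (?:influencer|character)\b",
    r"#ad\b",  # \b stops "#adventure" from counting as "#ad"
]
# Assumption: roughly how many characters a feed shows before truncating with "more".
PREVIEW_CHARS = 125
# A run of hashtags at the very end of the caption.
TRAILING_HASHTAGS = re.compile(r"(?:\s*#\w+)+\s*$")


def disclosure_problems(caption: str, preview_chars: int = PREVIEW_CHARS) -> list[str]:
    """Heuristic pre-publish check; an empty list means no problems were flagged."""
    text = caption.lower()
    hits = [m.start() for p in DISCLOSURE_PATTERNS if (m := re.search(p, text))]
    if not hits:
        return ["no disclosure found"]
    first = min(hits)  # position of the earliest disclosure phrase
    problems = []
    if first >= preview_chars:
        problems.append("disclosure only appears after the visible preview")
    tail = TRAILING_HASHTAGS.search(text)
    if tail and first >= tail.start():
        problems.append("disclosure is buried in a trailing block of hashtags")
    return problems


print(disclosure_problems("AI-generated look of the day, styled by our team. #fashion"))
print(disclosure_problems("New look today! #style #ootd #ad"))
```

The second caption is flagged because its only label hides at the end of a hashtag string, which is exactly the kind of placement the guidance warns against.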

For brands, compliance becomes a strategic advantage. According to 2024 research, proactive disclosure boosts trust by 96%. Meanwhile, 94% of consumers now expect every piece of AI-generated content to carry a label.

Non-disclosure creates legal exposure and reputational damage. Smart teams build disclosure into the persona’s narrative from launch. They frame it as part of the creative experiment rather than an afterthought.

Cross-border operations add layers. What satisfies U.S. rules may need extra cultural framing in Europe or Asia. Character-driven marketing here means auditing every campaign against local expectations while maintaining one coherent digital identity.

Early movers who treat regulation as a creative brief, not a burden, gain first-mover trust in regulated markets.

Accountability in the Age of Autonomous AI Influencers: Who’s Responsible?

Today, most virtual influencers still have human teams behind the curtain. That may soon change. Fully autonomous AI-driven characters could chat individually with millions, adapt in real time, and even generate their own storylines. The question grows urgent: if something goes wrong, who answers?

Scholar Nadine Walter highlights the exact dilemma. When a virtual influencer spreads misleading advice, trivializes trauma, or triggers widespread distress, responsibility could fall on the brand, the developers, the platform, or evaporate into “it’s just code.” No clear legal precedent exists yet for autonomous cases.

For marketing leaders, this demands proactive control. Build accountability into character design by setting strict guardrails on topics, tone, and claims. Document every decision layer.
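
Guardrails and decision logs can start as simple, reviewable rules. Here is a minimal sketch of that idea: a draft post is checked against blocked topics, banned claims, and topics that require human review, and every outcome is appended to an audit log. The policy values and names such as `Guardrails` and `review_post` are hypothetical, and plain substring matching is a deliberate simplification that a production system would need to improve on.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Guardrails:
    # Hypothetical policy values; each brand would define its own.
    blocked_topics: set[str] = field(default_factory=lambda: {"medical advice", "trauma storyline"})
    banned_claims: tuple[str, ...] = ("guaranteed results", "clinically proven")
    require_human_review: set[str] = field(default_factory=lambda: {"finance", "health"})


@dataclass
class Decision:
    post_id: str
    outcome: str  # "blocked", "needs_review", or "approved"
    reasons: list[str]
    timestamp: str


def review_post(post_id: str, text: str, topics: list[str],
                rails: Guardrails, log: list[Decision]) -> Decision:
    """Apply the guardrails to a draft post and append the decision to an audit log."""
    lowered = text.lower()
    reasons = [f"blocked topic: {t}" for t in topics if t in rails.blocked_topics]
    # Naive substring check for claims the persona must never make.
    reasons += [f"banned claim: {c}" for c in rails.banned_claims if c in lowered]
    if reasons:
        outcome = "blocked"
    elif any(t in rails.require_human_review for t in topics):
        outcome = "needs_review"
        reasons = [f"human review required for: {t}" for t in topics
                   if t in rails.require_human_review]
    else:
        outcome = "approved"
    decision = Decision(post_id, outcome, reasons, datetime.now(timezone.utc).isoformat())
    log.append(decision)  # every decision is recorded, including approvals
    return decision


audit_log: list[Decision] = []
rails = Guardrails()
print(review_post("p1", "Our serum is clinically proven!", ["beauty"], rails, audit_log).outcome)
```

Logging approvals as well as blocks is the design choice that matters here: it gives the brand a record of who decided what, and when, if a post is later questioned.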

Hybrid models (AI-assisted but human-overseen) offer a practical bridge while full autonomy arrives. Brands that lead on visible responsibility turn potential liability into proof of ethical leadership, strengthening long-term consumer faith.

How to Future-Proof Your Strategy?

Overall, the horizon looks dynamic. Market projections show virtual influencers claiming up to 30% of influencer budgets by 2026, with expansion into finance, education, and healthcare.

Metaverse integration will let characters interact in immersive worlds, creating new engagement layers. Non-human avatars may appeal especially to socially anxious audiences seeking judgment-free connection.

Yet challenges remain: regulatory tightening, cultural nuance demands, and the need for authentic representation that respects lived experience.

Brands that invest now in ethical frameworks and hybrid models will lead when autonomy scales. The character-based edge lies in designing digital personas that evolve thoughtfully alongside audience expectations rather than chasing every trend.

Treat AI Influencer Ethics as Strategy, Not Constraint

Mastering the ethics of AI influencer marketing takes time; it’s not something you can achieve overnight. Still, taking the right initial steps can transform thoughtful practices into a competitive advantage. The key is to begin.

Here’s a quick and helpful guide to ensure a safe and successful virtual influencer journey.

Step 1: Audit Your Persona’s Real Value

Start here by honestly reviewing whether your AI influencer brings fresh creative depth or is simply a quick way to cut costs.

Ask yourself whether it truly respects your audience’s intelligence and aligns with strong virtual influencer ethics. This foundational check ensures every persona you build adds meaningful value from the beginning.

Step 2: Make Transparency Part of the Story

Move beyond basic legal requirements and naturally weave AI influencer transparency into the heart of your persona’s story from day one.

Present your digital character as a creative companion or artistic vision, something audiences can embrace with excitement instead of surprise.

Step 3: Build a Strong Image of Accountable Leadership

Create clear, thoughtful rules around topics, claims, and how issues get handled so you stay ahead of the curve. Plan now for a more autonomous future by documenting decisions and keeping human oversight in place.

This step protects your brand while showing the world you take AI influencer ethics seriously, turning potential risks into proof of responsible leadership that audiences respect and remember.

Step 4: Measure What Truly Matters

Look beyond likes and clicks to track trust, sentiment, retention, and representation with the same care you give traditional metrics. Pay close attention to how people feel about your disclosure and authenticity. These insights give you the full picture.

Step 5: Pair AI with Human Voice

Bring human creators into the mix and involve diverse voices at every stage of design. This approach reduces bias and creates richer, more inclusive campaigns that honor virtual influencer ethics.

When you blend synthetic talent with real human perspectives, you spark broader appeal, avoid backlash, and build ecosystems where everyone (brands, creators, and audiences) benefits.

Step 6: Stress-Test and Keep Improving

Run quick perception tests with real audiences and review results against key factors like authenticity, disclosure, and culture. Adjust your content based on what you learn so your strategy stays fresh and responsive.

This ongoing process helps your AI influencers evolve naturally and maintain the trust that fuels long-term success.
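
Perception tests are easier to act on when scores are broken down by audience segment. The sketch below assumes survey responses rated on a 1 to 5 scale for the three factors named in this step, tagged by market; the field names, the `weak_spots` function, and the 3.5 threshold are illustrative assumptions rather than an established benchmark.

```python
from collections import defaultdict
from statistics import mean

FACTORS = ("authenticity", "disclosure", "culture")  # the factors named in Step 6


def weak_spots(responses: list[dict], threshold: float = 3.5) -> dict:
    """Average 1-5 scores per (market, factor) and return the pairs below the threshold."""
    scores = defaultdict(list)
    for r in responses:
        for factor in FACTORS:
            scores[(r["market"], factor)].append(r[factor])
    averages = {key: mean(vals) for key, vals in scores.items()}
    # Sorted so the report reads in a stable order: market first, then factor.
    return {key: round(avg, 2) for key, avg in sorted(averages.items()) if avg < threshold}


# Hypothetical survey responses from two markets.
responses = [
    {"market": "US", "authenticity": 4, "disclosure": 5, "culture": 4},
    {"market": "US", "authenticity": 3, "disclosure": 4, "culture": 4},
    {"market": "JP", "authenticity": 4, "disclosure": 2, "culture": 3},
    {"market": "JP", "authenticity": 5, "disclosure": 3, "culture": 3},
]
print(weak_spots(responses))
```

Here the flagged pairs would point the team toward reworking disclosure framing and cultural fit for one market while leaving the other untouched, in line with mapping cultural variations rather than rolling out one template worldwide.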

Step 7: Lead with Bold Vision

Finally, position your AI influencer as an inspiring digital companion that celebrates creativity and possibility while honoring real human connection. This visionary mindset shapes a brand story that feels both forward-thinking and truly human.

To sum up, treat ethics as a strategy, not a constraint. Ethical practices build resilience that survives scrutiny and fuels sustainable expansion.

Want to make your brand stand out in a fresh and exciting way? A virtual influencer could be the game-changer you’re looking for.

If you’re ready to create a digital character that connects with your audience, we’re here to help.

Get in touch today to start building a virtual influencer that truly speaks to your fans.

Long Story Short: Lead with Character, Leave a Lasting Impact

AI influencers mark a decisive shift in how brands build influence and community.

Transparency evolves from a compliance task to a foundation of trust. Societal effects stretch far beyond single campaigns to shape norms of beauty, connection, and authenticity.

Regulatory demands, accountability questions, human costs, psychological realities, and future trends all converge here in the broader context of AI influencer ethics and virtual influencer transparency.

Leaders who analyze these layers with confidence and creative vision gain the edge. They design characters that own their digital nature, disclose openly, measure success through both performance and ethical strength, and blend AI precision with human depth. Hybrid approaches show the clearest path.

Consider your next campaign.

Will you chase short-term control and risk shallow bonds, or will you shape AI characters that spark creativity while respecting the people who meet them?

The choice shapes campaign results and the digital culture your brand helps create.

FAQs

Q: Why is transparency important for virtual influencer ethics?

A: Transparency prevents audience feelings of betrayal when users discover the synthetic nature of the persona.

In addition, clear disclosure from the start maintains emotional connections based on honesty. That’s absolutely essential to supporting long-term trust and loyalty.

Q: What regulations apply to virtual influencers in the US?

A: In the US, the Federal Trade Commission (FTC) sets clear rules. AI-generated influencers must make their endorsements obvious, just as paid human influencers do. Otherwise, the brands behind them could face fines.

The disclosures need to be easy to see, not buried in captions or hashtags.

Q: What does the EU AI Act say about virtual influencers?

A: The EU AI Act addresses this in Article 50, which requires that people be told when they are interacting with an AI system and that AI-generated or manipulated content, such as deepfakes, be clearly disclosed.

Q: Who is responsible if a virtual influencer causes harm?

A: The digital persona itself has no legal personhood. Responsibility typically falls on the brand, developers, or human oversight team behind the virtual being.

Q: Do virtual influencers harm real creators or diversity efforts?

A: Yes, it can. Brands may shift budgets to synthetic talent. That reduces opportunities for human creators, particularly from underrepresented groups.

Q: What strategies can be employed to address concerns about job displacement from virtual influencers?

A: Hybrid campaigns (human talents + virtual talents) help mitigate displacement while expanding inclusive representation.

Q: How should brands disclose the use of virtual influencers?

A: As a brand, you should integrate disclosure directly into your digital talent’s core story. For example, you can describe the figure as a digital companion or artistic creation.

Being honest with your audience turns transparency into a distinctive, trust-building element.